There’s perhaps no more important issue than the protection of children from sexual abuse, and certainly none that is more emotive or politically potent. In any other circumstances, proposing to extend mass surveillance to communications that were previously confidential would be politically near impossible. But by invoking the justification of saving children, achieving the impossible can become a pressing priority.

The UK’s Online Safety Bill, currently in the final stages of negotiation between the House of Commons and the House of Lords, requires platforms to report Child Sexual Exploitation and Abuse content (CSEA content – more on this later) to authorities (Chapter 2, Part 4). It also contains a “spy clause” (Clause 122) under which platforms can be required by the regulator Ofcom to develop or source technology to enable the proactive detection of such content – even within private communications.

This article won’t be covering the technical difficulties of scanning for CSEA content while protecting users’ privacy, as this has been amply covered elsewhere. Neither will it address the formidable human rights obstacles raised by such a scanning mandate. Similarly, I’ll omit the dangers that weakening communications security could pose to whistle-blowers and dissidents, if scanning technologies were to be misused.

These auxiliary arguments, while valid, skirt the main selling point of the spy clause – that CSEA content (which most people reasonably assume refers to CSAM or child pornography) creates such grave and uniform harms that we ought to give up some privacy in aid of its elimination. I argue that this rests on false assumptions about what CSEA content actually is. In fact the Online Safety Bill defines it so broadly that it has very little to do with saving children at all, and could even put them at risk of greater harm.

What is CSEA content?

The Online Safety Bill doesn’t simply require platforms to scan for known CSAM – which many of them already voluntarily do. Rather, it would require them to detect and report a much broader category of content, including private intimate images that teens take of themselves on their own devices, and even artwork that the government defines as pornographic and obscene.

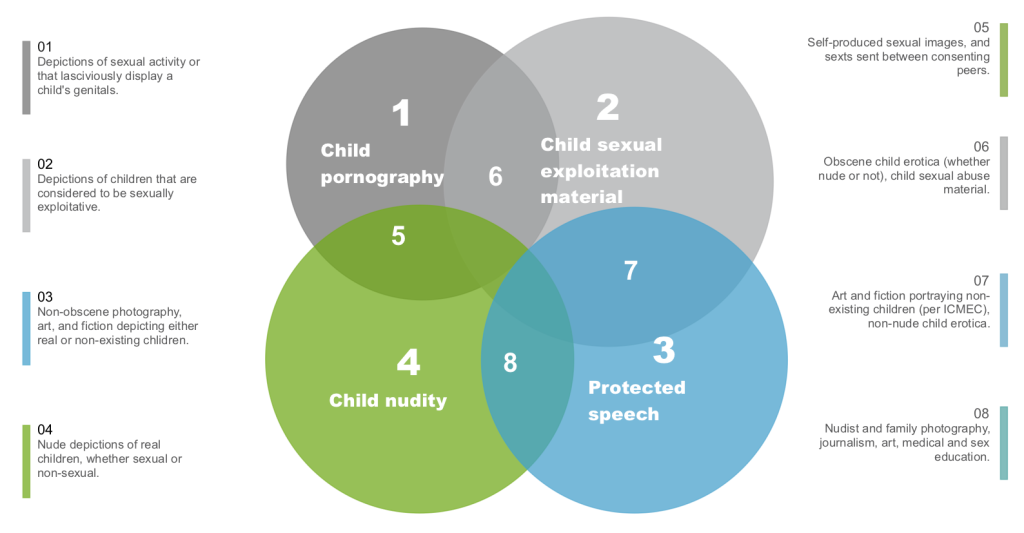

CSAM – child sexual abuse material – is a synonym for what was formerly referred to as, and is still defined in law as, child pornography. That is, depictions of sexual activity or lascivious exhibition of the genitals of a person under 18 years of age. In the diagram above, this is the dark grey circle. Platforms can and do scan for known instances of this content in unencrypted communications using the PhotoDNA hash databases distributed by NCMEC or the Internet Watch Foundation.

But under the Online Safety Bill, the definition of CSEA content is far broader than this. Not only would platforms be required to detect and report CSAM, but also material falling within all four of the circles illustrated above. This includes not only previously unseen and uncategorized photographs such as teen selfies and family bathtime photos that may be produced innocently, but also non-photographic images, such as computer-generated images, cartoons, manga art, and drawings.

Neither of these additional image types warrants the same legal treatment as images of child sexual abuse, and their inclusion under the same CSEA umbrella is cause for concern. As my last article already covered the harms inherent in extending child pornography law to encompass artistic images, I will omit further discussion of that case here to spend some more time on the risks of extending it to apply to AI-detected photographic content.

Self-generated images

While actors in the child protection sector tend to describe CSAM in graphic and even dysphemistic terms that foreground the worst cases of forcible abuse, it’s common ground that the majority of CSAM now being produced and distributed doesn’t fit in that category. About 78% of sexual images of minors detected by analysts online are self-generated images, typically recorded by teens in their bedroom using their own device.

There is a temptation to assume that teens would only take such images under pressure of adult grooming, since the only politically palatable narrative on the topic is that children are sexually innocent until they reach 18. But there is a quiet consensus among experts that taking and sharing nude images is normal post-pubescent behavior that becomes more common as teens grow up and continues into adulthood. While the resulting images are just as illegal and can cause just as much harm when distributed as images of forcible abuse, their provenance has more to do with teenage impulsivity and hormones than with adult predation, and this carries implications for how they should be addressed.

Defaulting to a criminal response is not the right solution in these cases. In the United States, children as young as 9 are listed on sex offense registries, some simply for sharing their own intimate photos. Indeed, the most common age to be charged with a sex offense is just 14, and up to a quarter of current sex offense registrants were juveniles when convicted. Although the problem is less stark in the United Kingdom, young people still run the risk of being criminalized for sexting, with only an informal police policy to protect them against such charges.

Adults, too, can be implicated by innocently dealing with photos of children under their care. In 2022, Google falsely reported a man to police after an AI scan of his cloud storage found photos of his child’s genitals that he had uploaded for a medical professional to review. In the same year a school administrator faced conviction for storing photos that were removed from students’ phones during his handling of a sexting incident.

Under an Online Safety Bill regime in which platforms are mandated to use AI tools to identify and report all intimate images of suspected minors to authorities, we can only expect the number of false positive reports to balloon. Since authorities are already falling behind in processing their existing large volume of reports of known abuse images, it’s quite unclear that the benefits of an additional influx of AI-identified self-produced images will outweigh its harms.

Balancing parental and platform responsibility

There is an alternative. Rather than entrusting a government agent, whether AI or human, to supervise your child’s private communications, with the ability to copy their intimate images and report them to authorities, it is far safer and more proportionate for caregivers to take on this role.

I’m grateful that when my own family experienced a sexting incident between a child and a friend, this was how the situation was resolved. The intimate image came first to the attention of a parent, who shared the information with the other parents, and the potential harm was averted through a talk with the children involved.

It’s important to note also that by “supervising” I do not refer to the use of spyware for monitoring, nor do I suggest that parents should control children’s login credentials. While some child protection groups do advocate for this, arguing that children have “no right to privacy”, this attitude will only breed distrust and make it more likely that children will hide their activities from parents.

Instead, child development professionals advise laying down ground rules, communicating openly about the opportunities and dangers that their children will encounter online, and taking an authentic interest in what they are doing. In this way, it’s more likely that children who encounter a risky situation will trust their parent to help them through it, rather than to punish them for their curiosity.

What platforms should be doing

Platforms are not the appropriate parties to be snooping on your child’s communications and viewing their camera roll, nor do most of them desire this responsibility. But there are steps that they can take to help keep young users safe from harm while also protecting their privacy.

As a baseline best practice, unencrypted image uploads should be scanned for known CSAM. Beyond this, the platform also has the option to moderate sexual content using AI classifiers, which will also likely uncover any new nude images of minors. These of course must be evaluated by the platform’s trust and safety team, following which any necessary reporting can be conducted.

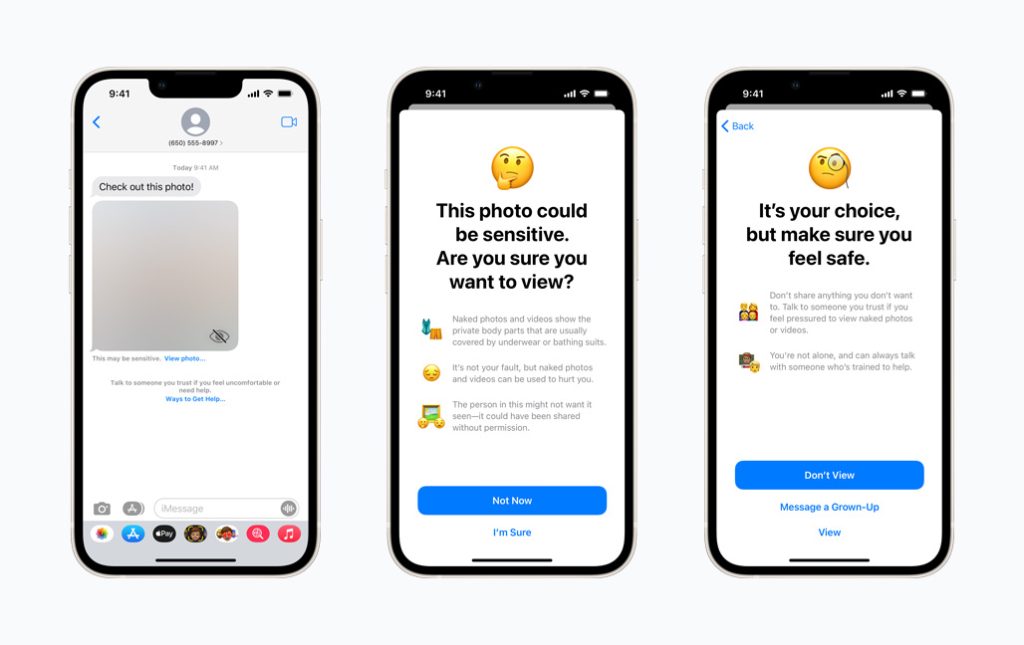

When images are encrypted before reaching cloud storage, options still exist for platforms to act. After shelving an initially ill-advised plan to perform on-device scanning for CSAM, Apple returned with a more privacy-protective option for parents. By turning on a feature in their Family Sharing plan, parents can ensure that if a child sends or receives an image that appears to contain nudity (as evaluated by an on-device algorithm), the child will receive a warning about the content, along with stigma-free help resources and a suggestion to contact someone they trust.

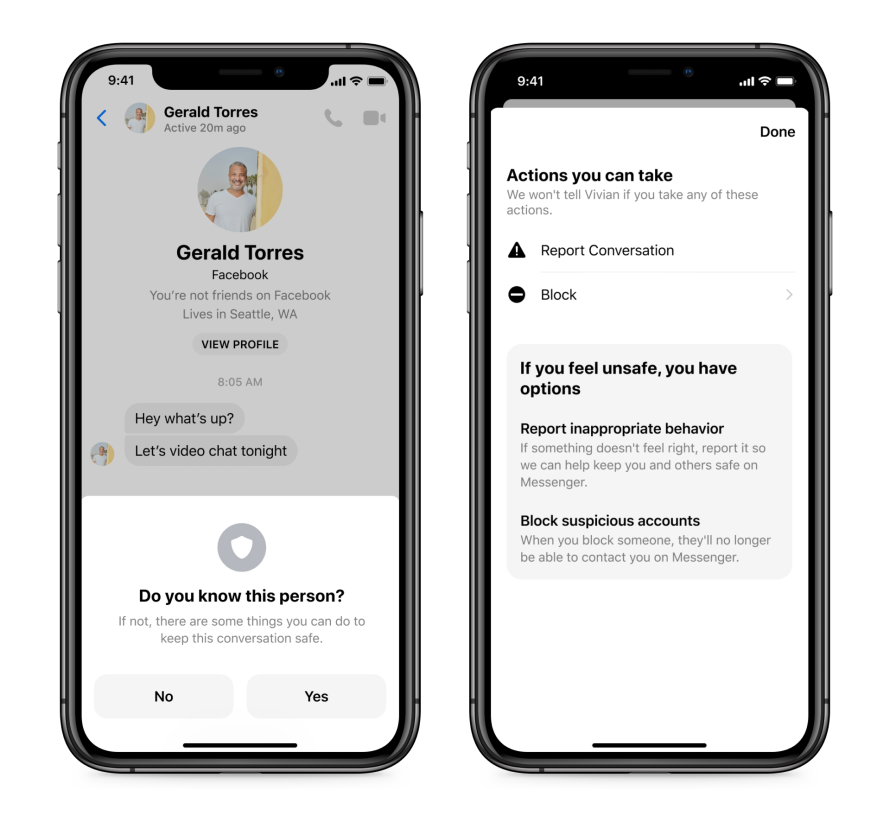

Finally, although platforms may not have the means or desire to snoop on the communications that young users make, there are privacy-protective steps that they can take to prevent children from making suspicious connections with strangers. Facebook, for example, uses machine learning to flag suspicious connections for review, and triggers in-app warnings when adult accounts message unrelated minors. TikTok disables messaging altogether for minors under 16, Roblox only allows under-13s to message users on their ‘Friend’ list, and Snapchat disables messaging between adults and minors unless they have a certain number of friends in common.
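
To make the difference between these design choices concrete, here is a minimal sketch of how such messaging rules could be expressed without inspecting any message content. It is an illustration only, not any platform's actual implementation: the `MessagingPolicy` and `User` types, the `may_message` function, the age of majority constant, and every threshold are hypothetical, and the mutual-friend count in particular is an invented placeholder.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

ADULT_AGE = 18  # assumption: threshold separating adults from minors


@dataclass(frozen=True)
class MessagingPolicy:
    """Hypothetical policy knobs; None means the rule is switched off."""
    no_messaging_below: Optional[int] = None   # block all messaging under this age
    friends_only_below: Optional[int] = None   # under this age, friends list only
    min_mutual_friends: Optional[int] = None   # required for adult-minor contact


@dataclass
class User:
    user_id: str
    age: int
    friends: Set[str] = field(default_factory=set)


def may_message(sender: User, recipient: User, policy: MessagingPolicy) -> bool:
    """Decide from account metadata alone whether a message may be sent."""
    youngest = min(sender.age, recipient.age)

    # Rule 1: messaging disabled altogether below a minimum age.
    if policy.no_messaging_below is not None and youngest < policy.no_messaging_below:
        return False

    # Rule 2: young users may only message accounts on the sender's friends list.
    if policy.friends_only_below is not None and youngest < policy.friends_only_below:
        if recipient.user_id not in sender.friends:
            return False

    # Rule 3: adult-minor contact requires enough friends in common.
    crosses_age_line = (sender.age >= ADULT_AGE) != (recipient.age >= ADULT_AGE)
    if policy.min_mutual_friends is not None and crosses_age_line:
        if len(sender.friends & recipient.friends) < policy.min_mutual_friends:
            return False

    return True


# Usage: an adult and a 16-year-old who share two friends.
adult = User("adult", 30, {"x", "y"})
teen = User("teen", 16, {"x", "y"})
allowed = may_message(adult, teen, MessagingPolicy(min_mutual_friends=2))
```

The point of the sketch is that each rule depends only on ages and friend lists, so none of them requires a platform to read or scan what users actually send.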

Tech companies do have a social responsibility to make their products safer by design. In that respect, leaving aside the provisions critiqued above and its age verification mandate (a subject for a separate blog post), the Online Safety Bill isn’t all bad. Even before its passage, it has already influenced other jurisdictions to encourage platforms to proactively assess the risks facing their young users, and to develop systems and processes that address those risks.

What governments ought to be doing for their part is to recognize that it can’t be platforms alone that invest in creating safe environments for children. Expenditure on public education and prevention has long been far outstripped by expenditure on criminal justice responses to sexual violence. It’s time that lawmakers shifted their attention from censorship and surveillance laws and towards measures to support public health and education.

Conclusion

The prospect of platforms being required to roll out compulsory government-approved AI scanning to intercept visual artworks and camera roll photos is a terrifying science fiction scenario that should never become a reality. Governments and the corporate sector alike have a responsibility to uphold human rights standards, and the imperative of protecting children from harm does not negate this responsibility. As such I consider it incumbent upon trust and safety professionals who situate their work within a rights framework to push back against government proposals that flout human rights norms.

In this context, the Online Safety Bill’s proposal that platforms should report a broad range of artistic and self-produced content to law enforcement, even if it is transmitted over encrypted channels, is an obvious and egregious example of government overreach. Governments must never be empowered to conduct general monitoring of communications, and images other than those depicting actual child abuse should never be required to be reported to law enforcement. Instead, society must affirm that it is principally a parent’s responsibility to secure their children’s devices and to be open and honest about how they supervise their use.

While the Online Safety Bill’s intent is to protect children from harm, its wide-reaching provisions, which include scanning for private intimate images and even artwork, dangerously entrust platforms with responsibilities that more properly fall to parents. A more appropriate role for platforms to play is to prioritize safer design, while governments should recognize that measures that support public health and education may do more to advance the cause of child protection than censorship and surveillance laws.