False Positives, Real Harm: When Child Safety Systems Get It Wrong

When Jonas (not his real name) posted a photo of himself in sports clothes to his own Instagram account, the last thing […]

How LGBTQ+ Content Could Become Illegal

In 2020, Yulia Tsvetkova, a 26-year-old Russian theater director and artist, found herself under house arrest, facing up to six years in prison. Her crime? Sharing feminist and LGBTQ+-friendly artwork on social media, which authorities branded as “propaganda of non-traditional sexual relations among minors.” Just a year earlier, Michelle, a 53-year-old transgender woman, was sentenced […]

Cybersecurity for Trust and Safety Professionals Handling CSAM

Following five years working in trust and safety in the United States, this year I moved back to Australia. While here, I received a notification from Cloudflare’s excellent CSAM Scanning Tool (reviewed here) that a forum post uploaded to a website of one of my clients had been identified as suspected CSAM (child sexual abuse […]

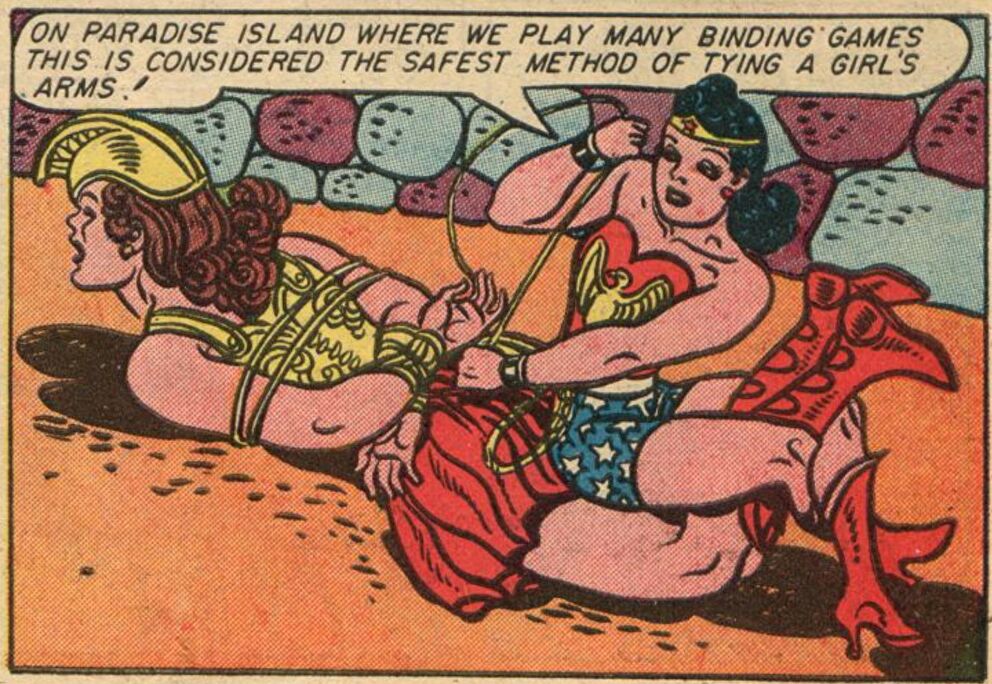

Drawing the Line: Australia’s Misguided War on Comics

Did you know that bringing a comic book into Australia that contains scenes of consensual sexual bondage is illegal? That you could be arrested for doing so, under the same provision of the Customs Act that you’d be arrested for if you brought in real child sexual abuse material (CSAM)? This is just one of […]

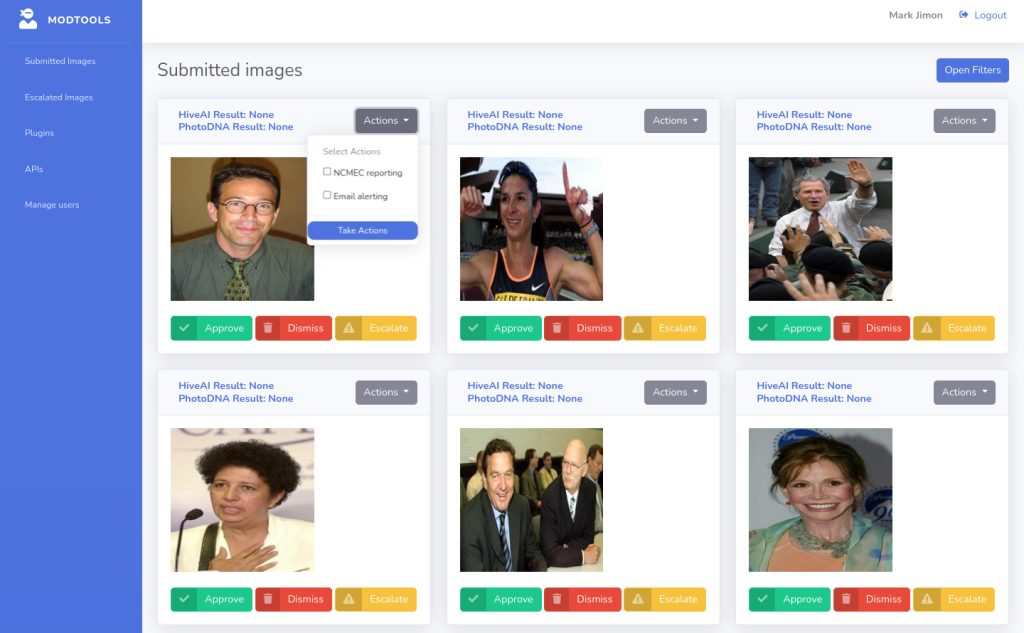

Modtools Image: Open Source Image Moderation

Among the many challenges that image moderators face, one is the lack of open source tooling to support their work. Platforms are forced to either fork out big sums for proprietary image moderation dashboards geared toward the enterprise (such as Thorn’s Safer.io), or to reinvent the wheel by creating their own dashboards and their own […]

AI and Victimless Content under Europe’s CSA Regulation

On November 14, the European Parliament presented its compromise take on the European Commission’s controversial draft CSA Regulation. Commissioner Ylva Johansson’s proposal mandates that Internet platforms operating in Europe conduct surveillance of their users. It effectively requires them to use AI classifiers to sift through a vast stream of private communications to identify suspected child […]

Why the EU Will Lose Its Battle for Chat Control

Nobody wants to have their private communications vetted by AI robots. That’s the message that rings loud and clear from the backlash against European Commissioner Ylva Johansson’s proposal for a Child Sexual Abuse (CSA) Regulation. Division over this proposal, dubbed “Chat Control 2.0” by its critics, has only deepened following recent revelations about the extent […]

Child Protection or Privacy Invasion? Examining the Online Safety Bill

There’s perhaps no more important issue than the protection of children from sexual abuse, and certainly none that is more emotive or politically potent. In any other circumstances, proposing to extend mass surveillance to communications that were previously confidential would be politically near impossible. But by invoking the justification of saving children, achieving the impossible […]

Generative AI and Children: Prioritizing Harm Prevention

Among many hot policy issues around generative AI, one that has gained increasing attention in recent months is the potential (and, increasingly, actual) use of this technology to create, as the New York Times puts it, “explicit imagery of children who do not exist.” The concerns expressed are mostly pragmatic, not moral; for example, the […]

Four Proposed Child Safety Laws, Four Approaches

The U.S. EARN IT Act, Britain’s Online Safety Bill (and its stateside counterpart, the Kids Online Safety Act), an upcoming European regulation combating child sexual abuse online, and a proposed United Nations convention on cybercrime are among the current proposals for new legal instruments addressing child safety online at national, regional, and global levels. While […]